Snowflake flags enterprise AI governance risks as agentic systems scale

Organizations face mounting pressure to secure data, ensure reliability, and control AI agents as deployment moves from pilots to production

Enterprise adoption of artificial intelligence (AI) is accelerating, but executives and engineers are increasingly confronting a harder reality: scaling AI safely requires far more than models and compute. As organizations move from experimentation to production, gaps in governance, data integrity, and operational resilience are emerging as critical risks.

The shift toward “agentic” AI systems — where software agents autonomously query data, trigger workflows, and make decisions — is amplifying those concerns. Without strong controls, fragmented data environments and weak permissions can undermine accuracy, compliance, and trust in AI-driven outcomes.

“There is no AI strategy without a data strategy. If your data is fragmented and you don’t have clear governance policies and permissions, AI is going to have a hard time delivering value the way you expect,” said Christian Kleinerman, EVP, Product Management, Snowflake.

Kleinerman also pointed to resilience as a test of architecture. “If your system stops working when a cloud provider fails, it’s not a reliable architecture,” he said.

Enterprises, he added, should design systems that can withstand failures across infrastructure layers rather than relying on any single provider or environment.

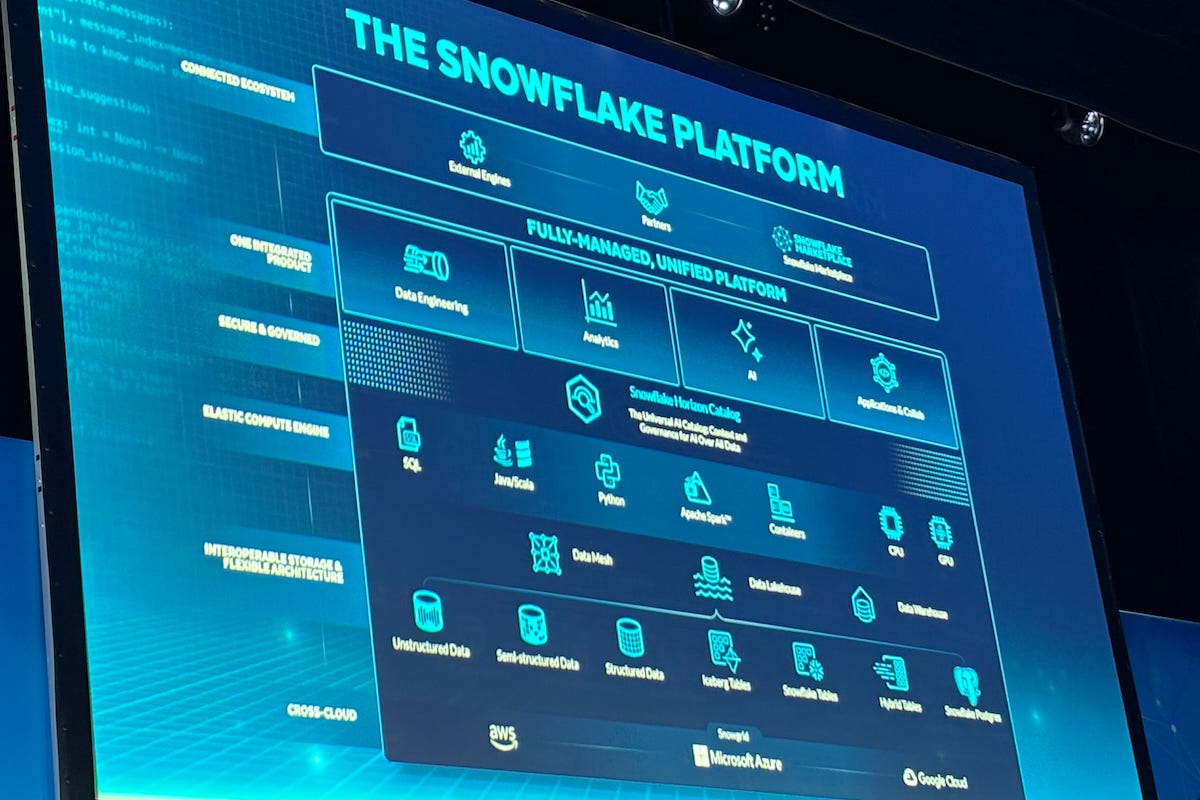

Snowflake is a fully managed, cloud-native data platform that enables organizations to consolidate data from multiple sources into a centralized environment for analytics, machine learning, and AI application development. It handles both structured and unstructured data and embeds governance and security controls across the data lifecycle.

Agentic shift

The growing interest in AI agents reflects a broader transition from analytics to action. Instead of generating insights for human interpretation, enterprise systems are increasingly expected to execute decisions directly — from pricing adjustments to customer engagement workflows.

“The goal is to make it easy to build not just agents, but enterprise-grade agents. These need to orchestrate structured data, unstructured search, and third-party tools, while staying within governance and security boundaries,” Kleinerman said.

That requirement is pushing organizations to rethink how data, models, and applications interact. Rather than exporting data to external tools, many enterprises are attempting to keep computation within controlled environments to reduce exposure and maintain auditability.

“A lot of what we do is to bring computation into the security boundary, so your data doesn’t have to be copied out and you don’t have to reinvent permissions and controls,” Kleinerman said.

The risks are not theoretical. As AI systems gain autonomy, errors can propagate faster and at greater scale. Without clear oversight, enterprises face potential failures in compliance, data accuracy, and operational continuity.

Event context

These issues were a central focus at Snowflake BUILD: The Dev Conference for AI & Apps, held in London on Feb. 5, 2026, where product leaders and enterprise users outlined how AI deployment is reshaping data infrastructure priorities.

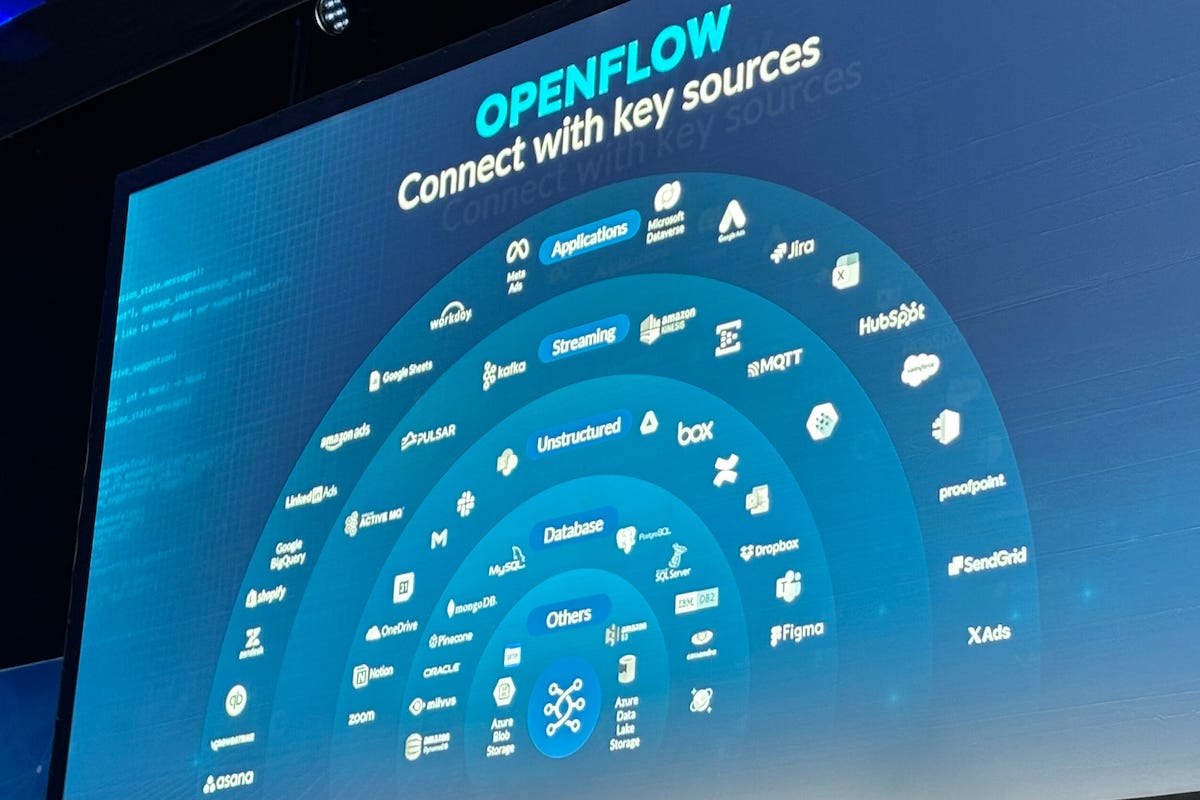

Speakers emphasized that fragmented data environments remain one of the biggest barriers to enterprise AI success. Organizations often struggle to unify structured and unstructured data, enforce consistent permissions, and maintain visibility across systems.

Kleinerman said many companies are already encountering these constraints. AI initiatives frequently stall when data is scattered across platforms or lacks proper governance, limiting the ability to generate reliable insights or automate decisions.

At the same time, developers are under pressure to accelerate delivery. New tooling and AI-assisted workflows are helping reduce development time, but they also introduce new dependencies that must be managed carefully to avoid operational risks.

Effie Goenawan, Principal Product Manager at Snowflake, said enterprise AI adoption is also shifting toward broader business use.

“Business users don’t need to learn SQL or write code. They can just ask questions, but the real challenge is ensuring those answers are grounded in trusted company data,” she said.

“This is a great time to be a builder. Whether you’re working in a graphical user interface (GUI) or a command-line interface (CLI), you now have options to build, deploy pipelines, and create applications much faster,” said Dash Desai, Principal Developer Advocate at Snowflake.

Desai demonstrated how developers can move between integrated development environments (IDEs), command-line tools, and browser-based environments while working on the same data workflows. This flexibility reduces friction in development, but it also requires teams to maintain consistent governance and oversight across multiple toolchains.

Data challenges

“We wanted to build an agent that is grounded and auditable, and that stays within the security and governance of our central data platform. Accuracy and control were critical for us,” said Kiran Kodandoor Nayak, Principal Software Engineer at Booking.com.

“Data teams were constantly getting questions from business users. These were time-consuming and business-critical, and it prevented them from focusing on more strategic work,” he said.

The company introduced AI-driven data agents only after establishing strict controls around how those systems access and interpret data. Continuous auditing and iterative refinement were required to ensure reliability.

“We started small, and as business users tested it, we continuously audited responses to ensure accuracy. That helped us refine our semantic models and improve trust,” Nayak said.

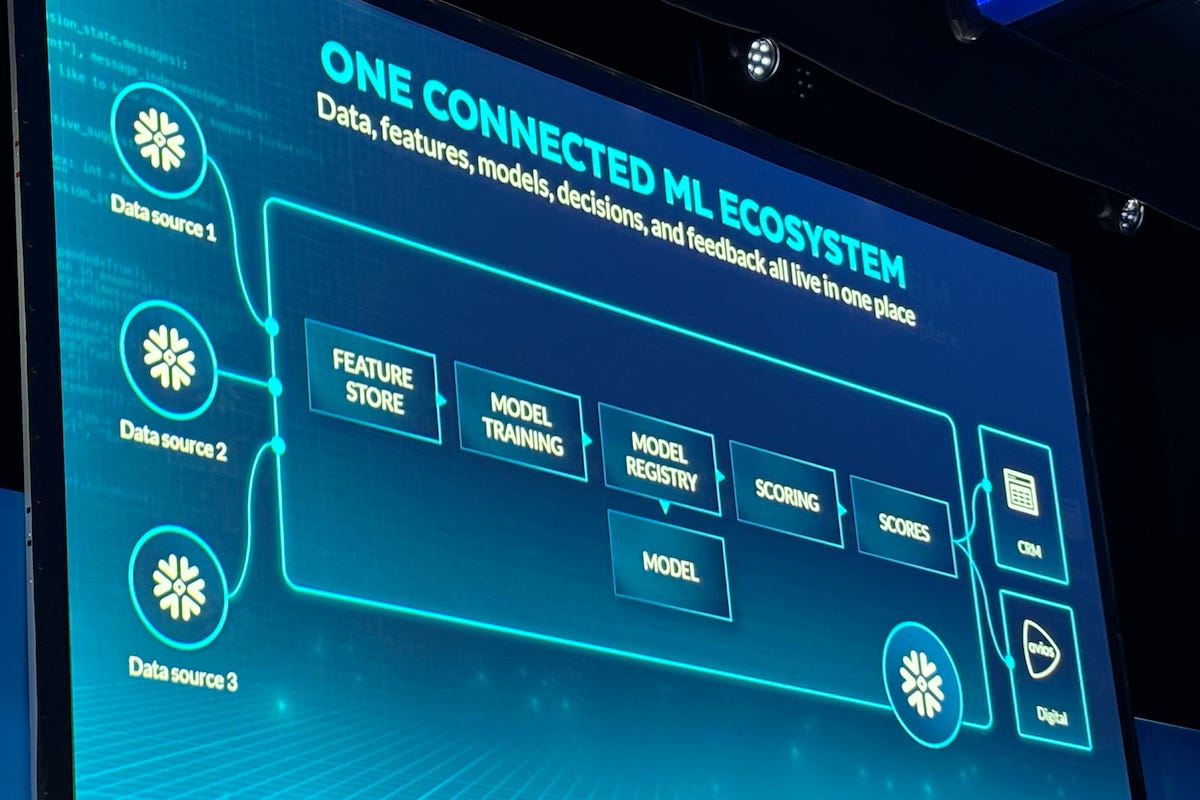

Similar challenges were described by Neha Patel, Lead Machine Learning Engineer at IAG Loyalty, which operates the Avios rewards program.

“Machine learning is used across the entire member lifecycle. We generate huge volumes of data, and our challenge is turning that into personalized actions at scale,” Patel said.

Her team centralized data and machine learning workloads to improve consistency and reduce operational complexity. Bringing models closer to the data allowed them to streamline pipelines and maintain tighter control over outputs.

Operational pressures

As AI systems move into real-time decision-making, operational requirements are becoming more demanding. Applications such as fraud detection, dynamic pricing, and recommendation engines require low-latency responses and continuous monitoring.

“These use cases — pricing, fraud detection, recommendations — require ultra-low latency serving of predictions. That brings a whole new set of use cases within reach,” said Annissa Alusi, Senior Product Manager at Snowflake.

Meeting those demands requires not only faster infrastructure but also stronger governance. Real-time systems must ensure that data is accurate, permissions are enforced, and decisions can be traced and explained.

At the same time, enterprises are seeking to reduce the burden on developers. AI-assisted tools are increasingly used to automate tasks such as pipeline creation, data transformation, and model deployment. While these tools improve productivity, they also require careful oversight to prevent errors from being introduced at scale.

“A lot of what we do is to simplify the experience and remove the heavy lifting, so you can focus on how to get value out of your data instead of managing infrastructure,” Kleinerman said.

Control vs speed

The broader challenge for enterprises is balancing speed with control. AI adoption is often driven by the need to improve efficiency and competitiveness, but rapid deployment can expose weaknesses in data governance and system design.

Organizations are therefore taking a more cautious approach, prioritizing auditability, security, and reliability alongside performance. Common measures include:

• Centralizing data to reduce fragmentation and improve consistency

• Embedding governance and permissions into every layer of the stack

• Continuously monitoring AI outputs to detect errors and bias

• Designing systems that can withstand failures without disrupting operations

The emergence of agentic AI is likely to intensify these requirements. As systems become more autonomous, enterprises will need to ensure that decisions remain transparent and aligned with business rules.

In practice, this means building AI systems that are not only powerful but also predictable and controllable. The ability to trace decisions, enforce policies, and recover from failures will be critical as AI moves deeper into core business processes.

For many organizations, the path forward will involve incremental deployment — starting with tightly scoped use cases, validating outcomes, and gradually expanding capabilities. Building trust in AI systems is as important as building the systems themselves.

The transition to enterprise AI is no longer a question of capability, but of governance. Organizations that manage the risks effectively are likely to gain a significant advantage as AI becomes embedded across the enterprise.