Real-time AI hits millisecond latency as enterprises overhaul ML platforms

Low-latency decisioning and integrated governance architectures are becoming essential as organizations deploy machine learning systems at scale

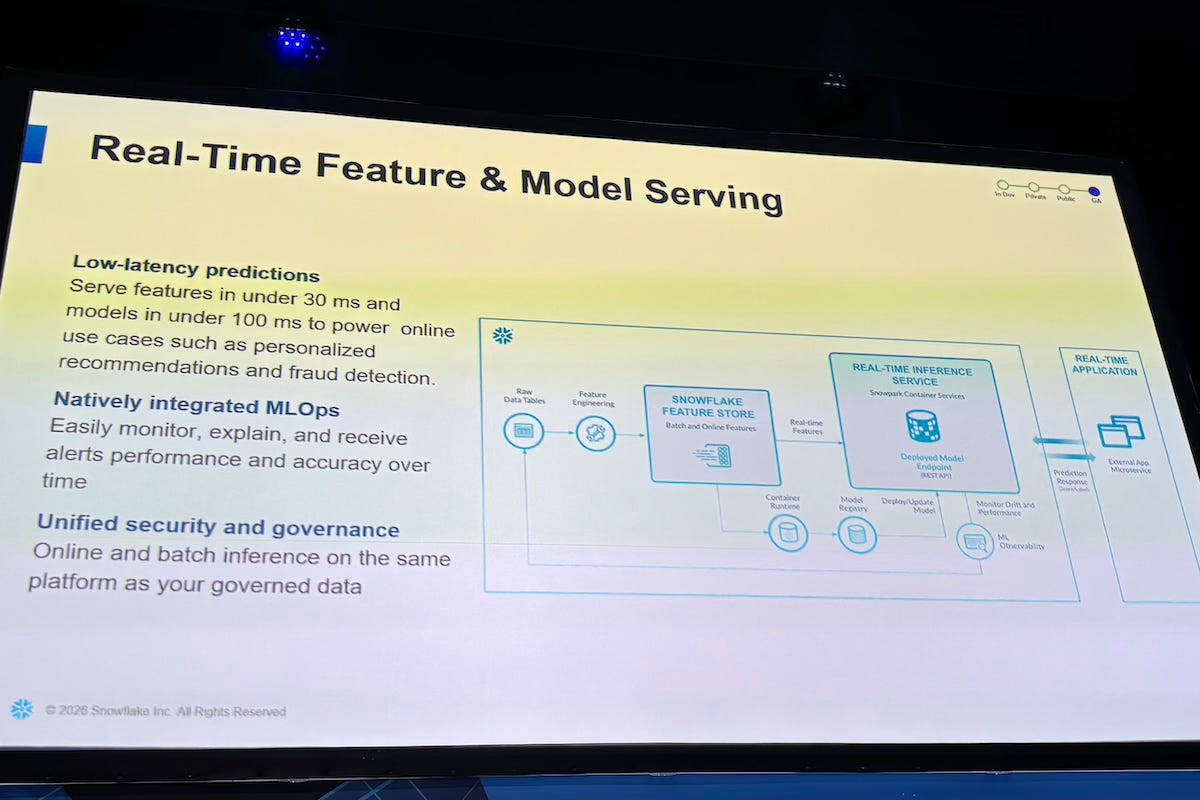

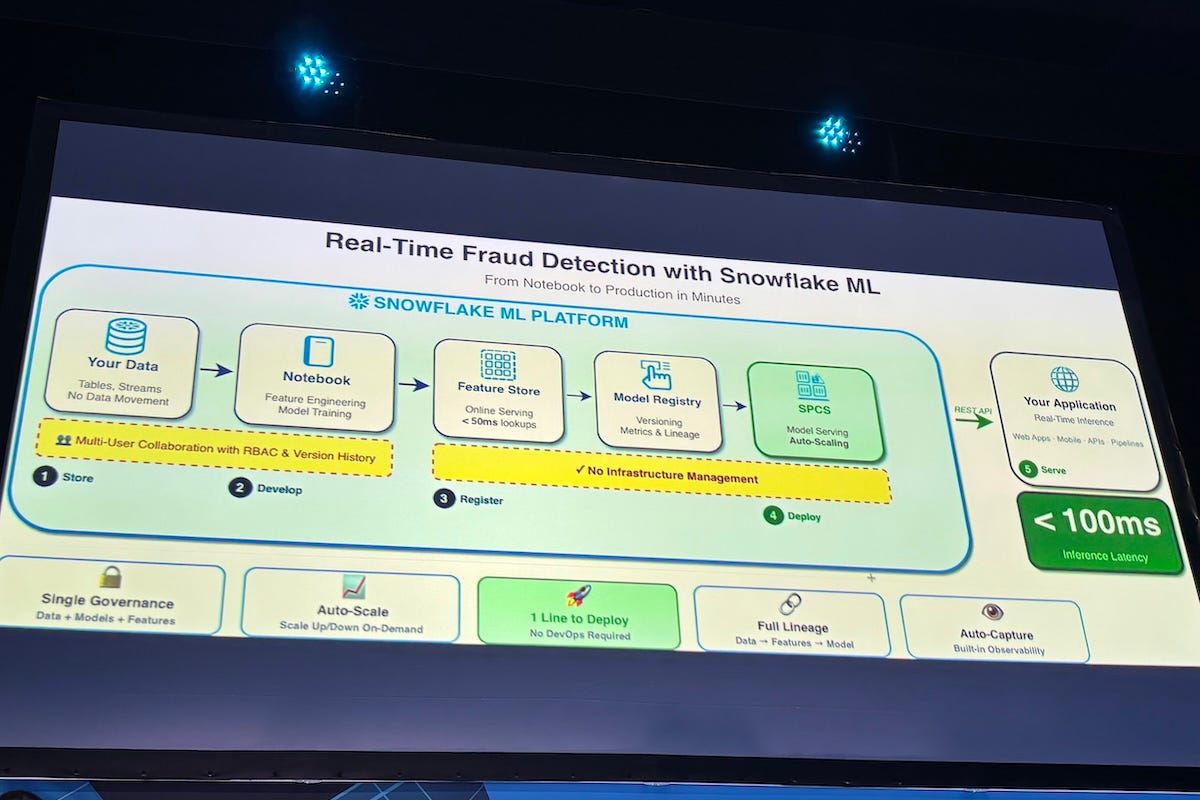

Real-time machine learning is moving from niche experimentation into a core enterprise capability, as organizations seek to make decisions instantly rather than in batch cycles. The shift is being driven by use cases such as fraud detection and personalization, where milliseconds can determine outcomes.

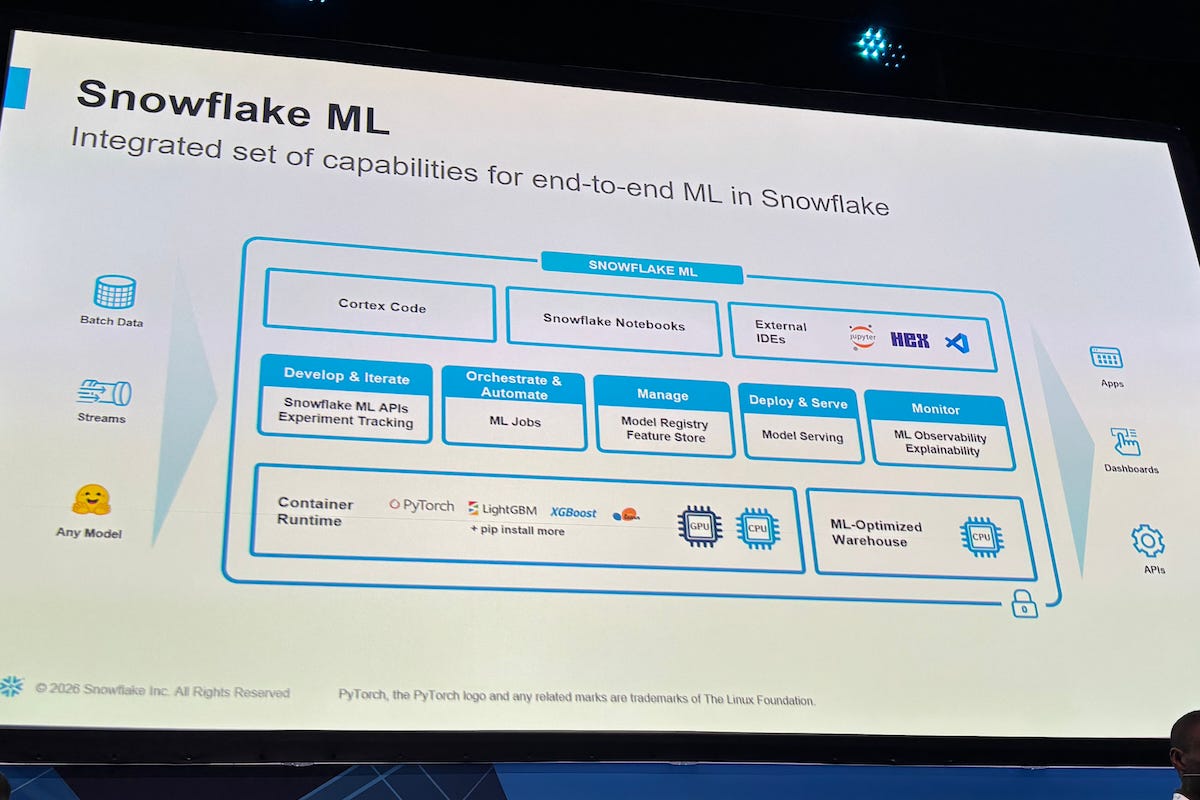

At the same time, enterprises are rethinking how machine learning systems are built and deployed. They are moving away from fragmented toolchains toward unified platforms that integrate data, models, and governance. This convergence is reshaping how artificial intelligence (AI) is operationalized.

“Sometimes you might have a use case that is real-time in nature. What comes to mind is fraud detection or personalization, where you want to meet the customer at the point of need,” said Larry Orimoloye, Principal AI/ML Field CTO at Snowflake. “What this allows you to do is to serve your model at low latency to 10 milliseconds, so you can actually service that model and give decisions in real time.”

The technical barrier to achieving that level of responsiveness has historically been the gap between model development and production deployment. Systems that perform well in testing environments often struggle to deliver consistent, low-latency performance in live settings.

“I could build the best model, train it and get the best accuracies, but when it comes to bringing it into production and hosting it for inferences, that always takes weeks,” said Harley Chen, Solutions Engineer at Snowflake. “Now with this approach, it is really one function, and you can deploy directly into production.”

Real-time shift

At Snowflake BUILD: The Dev Conference for AI & Apps in London on February 5, Orimoloye and Chen outlined how enterprises are moving toward real-time machine learning systems and integrated development environments. The session focused on building, deploying, and scaling online models using a unified architecture.

Snowflake provides a cloud-native data platform that integrates storage, processing, and analytics. Its AI and machine learning capabilities allow organizations to build and deploy models directly within the data environment, without moving data across systems.

The emergence of real-time inference reflects a broader transition in enterprise AI architecture. Models are increasingly expected to operate continuously and respond dynamically to live data streams. This is particularly evident in financial services, e-commerce, and cybersecurity, where delayed insights can translate into missed opportunities or increased risk.

Latency has become a defining metric in this transition. Systems must process large volumes of data while delivering predictions within tight time constraints.

“With our printout of 20 transactions, the overall latency is about 500 milliseconds, including network and authentication, but the per-record latency is under 30 milliseconds,” Chen said. “That is what enables these online use cases to actually work in practice.”
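The arithmetic behind those figures can be checked in a few lines. The sketch below assumes the per-record number is the total round-trip time spread evenly across the batch; the session did not spell out how it was measured, and the function name is illustrative only.

```python
def per_record_latency_ms(total_ms: float, batch_size: int) -> float:
    """Amortized latency per record when a batch is scored in one round trip."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return total_ms / batch_size


# Figures quoted in the session: about 500 ms end to end for 20 transactions.
amortized = per_record_latency_ms(500, 20)
print(f"{amortized:.1f} ms per record")  # 25.0 ms per record
```

On that assumption, 500 milliseconds across 20 transactions works out to 25 milliseconds each, consistent with the "under 30 milliseconds" Chen cited. Because network and authentication overhead is paid once per request, larger batches would push the amortized figure lower.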

The ability to sustain such performance under load is equally important. Real-world systems must handle bursts of activity without degrading response times.

“I can inject a burst of transactions and run that against the endpoint, and the system will return results almost instantly,” Chen said. “The average inference time is well under 50 milliseconds, even when running multiple requests.”
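A burst test of the kind Chen described might look roughly like the following. This is a minimal sketch, not the demo shown on stage: `fake_score` is a hypothetical stand-in for a call to a real model endpoint, and the transaction fields and worker count are made up for illustration.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor


def fake_score(transaction: dict) -> float:
    """Hypothetical stand-in for a request to a model endpoint."""
    time.sleep(0.001)  # simulate a small amount of inference work
    return 1.0 if transaction["amount"] > 900 else 0.0


def timed_call(transaction: dict) -> float:
    """Score one transaction and return the elapsed time in milliseconds."""
    start = time.perf_counter()
    fake_score(transaction)
    return (time.perf_counter() - start) * 1000.0


def run_burst(transactions: list, workers: int = 8) -> dict:
    """Send all transactions concurrently and summarize per-request latency."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        latencies = list(pool.map(timed_call, transactions))
    return {
        "count": len(latencies),
        "mean_ms": statistics.mean(latencies),
        # quantiles(n=20) returns 19 cut points; the last is the 95th percentile
        "p95_ms": statistics.quantiles(latencies, n=20)[-1],
        "max_ms": max(latencies),
    }


burst = [{"amount": float(i * 20)} for i in range(50)]
stats = run_burst(burst)
print(stats)
```

Reporting a tail percentile alongside the mean matters here, since an average under 50 milliseconds can still hide slow outliers during a burst.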

These capabilities are supported by advances in infrastructure, including distributed computing, containerized workloads, and tighter integration between data storage and processing layers.

Platform convergence

Alongside the push for real-time performance, enterprises are consolidating machine learning workflows into unified environments. This trend addresses longstanding challenges around data movement, security fragmentation, and operational complexity.

“A lot of customers ask why they should build machine learning pipelines directly on a single platform,” Chen said. “You don’t need to move your data back and forth or manage multiple security environments. Your data, your model, your features and your users are all governed under a single role-based access control model.”

The cost and risk associated with moving data between systems have become increasingly difficult to justify, particularly as regulatory requirements tighten and data volumes grow.

“Gone are the days when you need to manage egress or ingress costs or move data across different systems,” Orimoloye said. “Now you have everything within a governed perimeter, which simplifies both security and operations.”

This consolidation enables greater consistency in how models are developed and reused across organizations. Instead of isolated workflows, teams can build shared assets that scale across departments.

“When you build a model, you want to log it once and reuse it across the organization,” Orimoloye said. “That consistency is important when multiple teams are working on the same data and models.”

From experiment to operations

The shift toward unified platforms is also addressing a persistent bottleneck in machine learning: the transition from experimentation to production. Historically, this process required rebuilding models, reconfiguring environments, and coordinating across multiple teams.

“Moving machine learning from experimentation to production should not require rebuilding everything from scratch,” Orimoloye said. “The goal is to go from development to serving live traffic quickly and reliably.”

Integrated environments embed key machine learning operations (MLOps) capabilities directly into the development workflow. These include model tracking, feature management, and observability.

“You can have full transparency around what dataset was used to train the model, and as you iterate, you can see how the model evolves over time,” Chen said. “That visibility is critical when you are deploying models at scale.”

Observability has become a central requirement as models are deployed in dynamic environments. Teams need to understand how models behave over time to maintain accuracy and trust.

“One of the things you can do is look at drift and understand which features are influencing your model,” Orimoloye said. “You can drill down into specific segments to see how performance changes across different parts of your data.”
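The speakers did not say which drift metric the platform computes. As one common illustration, the population stability index (PSI) compares how a feature is distributed in training data versus live data. The sketch below is a plain-Python version under that assumption; the bin count, sample data, and function name are illustrative.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a live sample of one numeric feature."""
    lo = min(expected)
    hi = max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        eps = 1e-6  # avoid log(0) for empty buckets
        return [max(c / len(values), eps) for c in counts]

    e = bucket_shares(expected)
    a = bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


train = [i % 100 for i in range(1000)]   # baseline feature values
live = [v + 30 for v in train]           # same feature, shifted upward in production

print(population_stability_index(train, train))  # 0.0
print(population_stability_index(train, live) > 0)  # True
```

Running the same calculation on subsets of the data, for example per region or per customer tier, is one way to approximate the segment-level drill-down Orimoloye mentioned.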

The ability to trace model lineage and data transformations is also gaining importance, especially in regulated industries.

“You can see not only the versions of the model, but also the metrics and the lineage of those models,” Chen said. “That helps teams understand how models evolve and what data was used.”

Toward agentic workflows

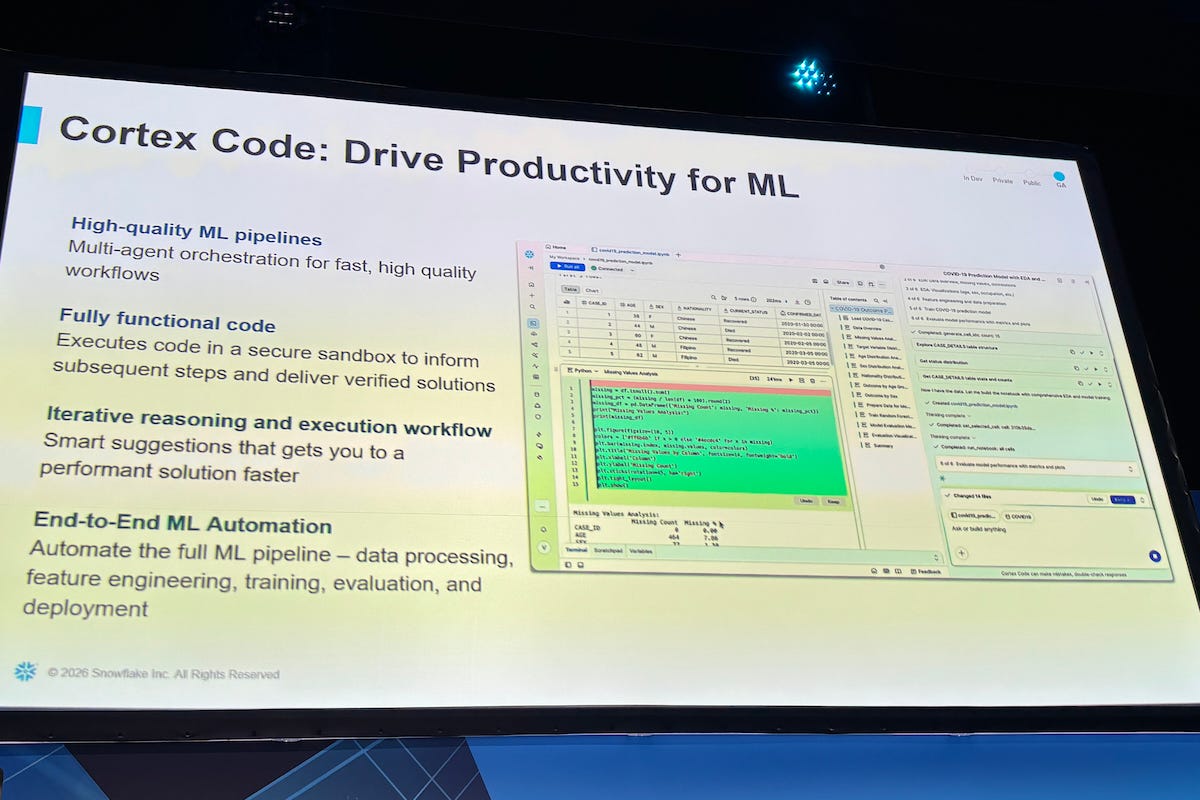

Another emerging trend is the use of AI systems to automate the development of machine learning pipelines. These agentic approaches are beginning to reshape how engineers interact with data and models.

“This is very powerful and a game changer,” Orimoloye said. “You can prompt it to build an end-to-end pipeline, use it to debug your code, or generate workflows using natural language.”

Rather than manually stitching together workflows, developers can rely on systems that decompose tasks, plan execution steps, and generate code.

“It starts to break the overall task down into different steps and plans how to approach each step,” Chen said. “Then it builds the assets step by step, almost like a structured to-do list.”

These capabilities suggest a future where machine learning development becomes more accessible and less dependent on specialized expertise.

Scaling enterprise AI

The convergence of real-time inference, unified platforms, and automated workflows is accelerating enterprise adoption of AI. Organizations are embedding machine learning into core business processes rather than treating it as isolated experimentation.

“One of the recurring themes we hear from customers is that the platform is scalable,” Orimoloye said. “You have the power of CPUs and GPUs, flexibility, cost efficiency, and a highly trusted environment that can support production workloads.”

As enterprises scale AI deployments, the focus is shifting from individual models to systems that operate reliably across the organization. Delivering fast, governed, and reusable machine learning capabilities is becoming a defining factor in competitive advantage.

The transition is still underway, but the direction is clear. AI is moving toward real-time decision-making, supported by integrated architectures that reduce complexity and enable scale.