DeepMind uses AI simulation to accelerate nuclear fusion development

A leading AI lab has deployed a machine learning agent on a live fusion reactor, and is now building tools to shrink the timeline to commercial fusion power

Fusion energy has long been slowed by the pace at which researchers can safely test new ideas on real machines — each experiment costly, each failure hard to interpret. Artificial intelligence (AI) is beginning to change that equation, not by replacing the physics, but by moving the riskiest steps into simulation first.

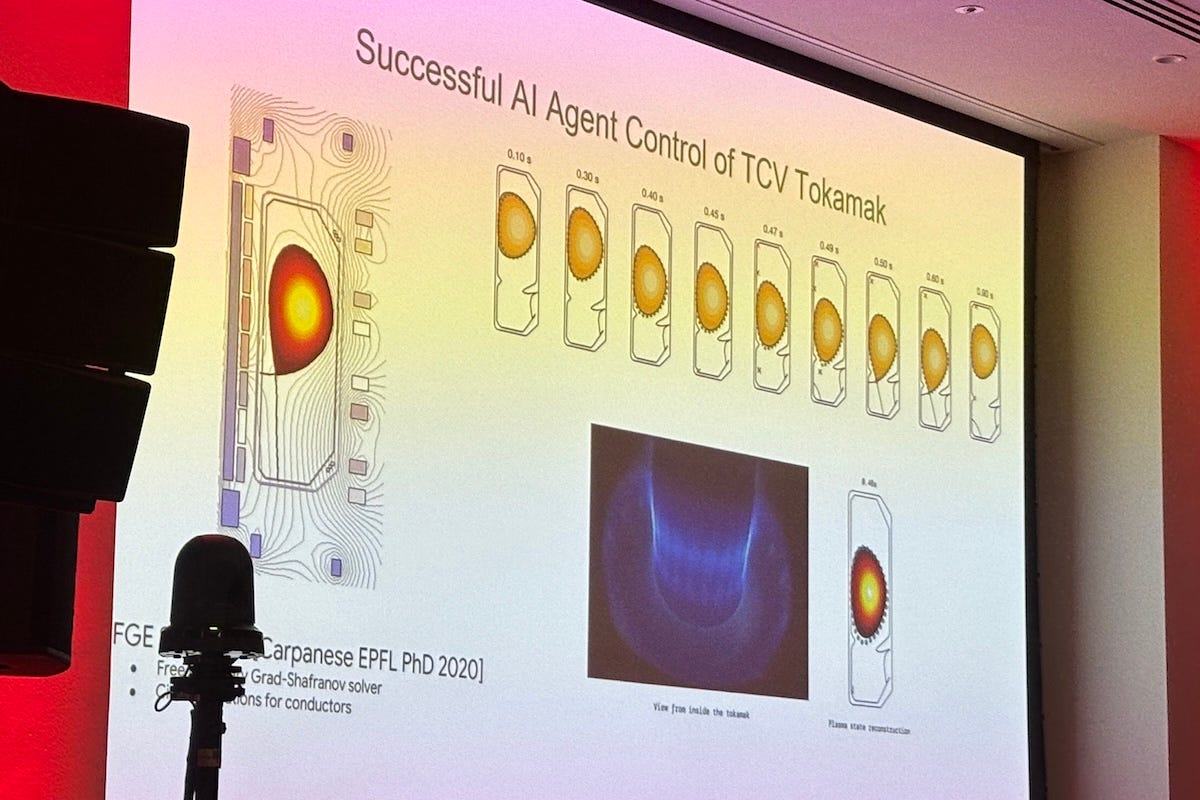

The approach has already cleared a significant milestone: a reinforcement learning agent, trained entirely in a virtual environment, was deployed on a live tokamak and demonstrated it could shape and hold a plasma with a precision and speed that conventional control systems struggle to match.

“We’re not going to take an untrained AI system and just put it on a tokamak straight away. That’s a really bad idea,” said Brendan Tracey, senior research engineer at Google DeepMind and lead of its fusion team.

“A tokamak plasma is similar to balancing a bar on your hand, except on TCV, they have 19 control coils trying to keep the plasma in place, and the plasma is unstable, so the controller acts at 10,000 times per second in order to keep it there,” he said.

The Tokamak à Configuration Variable (TCV, or variable configuration tokamak) is a research fusion device operated by the Swiss Plasma Center (SPC) at EPFL (École Polytechnique Fédérale de Lausanne) in Switzerland. DeepMind partnered with SPC to use AI to control the TCV, one of the most flexible tokamaks in the world. Its ability to produce a wide variety of plasma shapes makes it an ideal testbed for novel control approaches.

The team trained their reinforcement learning system on a simulator called FGE, effectively a flight simulator of the magnetic environment inside the tokamak, running thousands of experiments before deploying the agent on the real TCV control system.

Traditional control techniques require clever design and real-time observers for evaluation. They are effective, but the complexity involved makes them difficult to adapt quickly to new plasma configurations — precisely the kind of flexibility that an AI-trained agent can provide.

After deployment, the system demonstrated its ability to produce a range of plasma shapes, including the first-ever droplet stabilization on TCV. The work, carried out in collaboration with Federico Felici at SPC, resulted in a publication in Nature.

“We saw how much power really came from having a really good flight simulator, and what that did to enable the AI to solve this problem,” Tracey said.

DeepMind’s science unit, within which the fusion team sits, aims to use AI to advance scientific disciplines and tackle major societal challenges. Its portfolio spans weather prediction at fine temporal and spatial scales, crystalline structure prediction for materials discovery, a formal mathematics system that recently won a gold medal at the International Mathematical Olympiad, and protein folding — the last of which earned the Nobel Prize in Chemistry in 2024.

Simulation at full speed

Tracey was speaking at Fusion Fest, an event organized by Economist Impact in London on April 14, where he outlined how AI is compressing fusion development timelines by moving experimentation into simulation.

His path to the field was, by his own description, a winding one. He completed a PhD in aerospace engineering at Stanford University, applying machine learning to turbulence modeling at a moment when the technology was transforming from a niche computer science topic into something far larger.

“Machine learning went from this little niche thing that a couple of computer scientists were working on and transformed into the start of what it is today. It was genuinely great to be there while this was all coming about,” he said.

A postdoc at the Santa Fe Institute followed, focused on multidisciplinary optimization and theoretical machine learning. He joined DeepMind shortly after AlphaGo was released, drawn by what he saw as the most exciting work in AI at the time. His first major project outside fusion was improving the control system at LIGO (Laser Interferometer Gravitational-Wave Observatory) — work that demonstrated an AI controller could make the world’s most precise measurement instrument even more precise.

The TCV experiment pointed toward what he believes is the next frontier: a faster, more capable simulator. His team has developed Torax, a simulation of the plasma itself that models how temperature, density and fusion power evolve over time. Written in JAX, a Python library developed at Google originally for machine learning, Torax runs natively on graphics processing units (GPUs) and tensor processing units (TPUs).

“We can get simulations now in a minute at an accuracy that used to take more like an hour. That is really a powerful change,” Tracey said.

The speed gain does more than save time. Because Torax can run thousands of simulations rapidly, the team can map the full range of possible plasma outcomes rather than relying on a single prediction.

“We can simulate not just one prediction of what’s going to happen, but quantify our uncertainty in what’s going to happen, and run thousands of simulations to try to build towards safe and reliable pulses,” he said.
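The idea of running many simulations to quantify uncertainty can be illustrated with a minimal sketch. It is not Torax and does not use its API: the zero-dimensional energy balance, the function names and the 10% spread on the confinement time are all assumptions chosen for illustration. Each run samples an uncertain input, simulates a pulse, and the ensemble is summarized as a mean with a 5th to 95th percentile band rather than a single number.

```python
import random
import statistics


def simulate_pulse(confinement_time, heating_power=1.0, dt=0.01, steps=500):
    """Toy 0-D energy balance, dW/dt = P_heat - W / tau_E, integrated with
    explicit Euler. A stand-in for a real plasma simulator (assumption)."""
    energy = 0.0
    for _ in range(steps):
        energy += dt * (heating_power - energy / confinement_time)
    return energy


def run_ensemble(n_runs=1000, tau_mean=1.0, tau_rel_sigma=0.1, seed=0):
    """Sample the uncertain confinement time and simulate one pulse per sample."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_runs):
        tau = rng.gauss(tau_mean, tau_rel_sigma * tau_mean)
        # Clip to keep the sampled value physical and the Euler step stable.
        tau = max(0.1 * tau_mean, tau)
        results.append(simulate_pulse(tau))
    return results


def summarize(results):
    """Return the ensemble mean and an approximate 5th-95th percentile band."""
    ordered = sorted(results)
    n = len(ordered)
    return {
        "mean": statistics.fmean(ordered),
        "p05": ordered[int(0.05 * (n - 1))],
        "p95": ordered[int(0.95 * (n - 1))],
    }


if __name__ == "__main__":
    print(summarize(run_ensemble()))
```

The width of the band between `p05` and `p95` is the useful output here: a pulse plan whose band stays inside operating limits is a safer candidate than one whose single best-guess prediction merely looks acceptable.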

He said Torax can also natively ingest machine learning models, meaning that for physical phenomena harder to model with traditional equations, a learned model can be embedded directly into the simulator. The team is simultaneously using historical experimental data to calibrate Torax and improve its predictive accuracy over time.
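One way to picture a simulator that accepts either a hand-written equation or a learned model is as a solver that takes the physics term as a pluggable function. The sketch below is a toy under stated assumptions, not Torax's interface: `TransportModel`, `analytic_loss`, `fit_linear_surrogate` and `simulate` are hypothetical names, and a least-squares line stands in for a trained machine learning model.

```python
from typing import Callable, Sequence

# Hypothetical interface: maps temperature to a heat-loss coefficient.
TransportModel = Callable[[float], float]


def analytic_loss(temperature: float) -> float:
    """Hand-written closure: a constant loss coefficient (toy assumption)."""
    return 1.0


def fit_linear_surrogate(temps: Sequence[float],
                         losses: Sequence[float]) -> TransportModel:
    """Fit a least-squares line to (temperature, loss) samples.
    Stands in for a model learned from experimental data."""
    n = len(temps)
    mean_t = sum(temps) / n
    mean_l = sum(losses) / n
    var_t = sum((t - mean_t) ** 2 for t in temps)
    cov = sum((t - mean_t) * (l - mean_l) for t, l in zip(temps, losses))
    slope = cov / var_t
    intercept = mean_l - slope * mean_t
    return lambda temperature: intercept + slope * temperature


def simulate(loss_model: TransportModel, heating: float = 1.0,
             dt: float = 0.01, steps: int = 1000) -> float:
    """Integrate dT/dt = heating - loss(T) * T with explicit Euler.
    The solver does not care whether loss_model is analytic or learned."""
    temperature = 0.0
    for _ in range(steps):
        temperature += dt * (heating - loss_model(temperature) * temperature)
    return temperature


if __name__ == "__main__":
    # Made-up calibration data, for illustration only.
    surrogate = fit_linear_surrogate([0.0, 1.0, 2.0], [1.0, 1.5, 2.0])
    print("analytic closure:", simulate(analytic_loss))
    print("learned surrogate:", simulate(surrogate))
```

Keeping the solver unchanged while swapping the closure is the design point: the learned component can be recalibrated as new data arrive without rewriting the rest of the simulation.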

SPARC in the crosshairs

Despite the promise of simulation, Tracey is clear-eyed about its limits. He says holding two contradictory beliefs at once is precisely what hard problems require.

“When we’re doing something as hard as fusion, you have to adopt mutually contradictory viewpoints in order to get the best possible outcome,” he said.

The team must simultaneously strive for the most complete simulator possible and accept that a perfect one is unattainable — an assumption that requires rigorous uncertainty quantification and openness to updating models as new data arrive.

That same philosophy shapes how DeepMind thinks about AI and traditional control methods. Rather than treating them as rivals, he said the field will need to find the most effective blend of advanced AI and reliable conventional systems.

A collaboration with researcher Sophie Gournaud illustrated the point: she was studying how plasma shape affects fusion performance and needed a path to a specific shape that proved difficult to find conventionally. DeepMind’s system identified a trajectory to reach it, which Gournaud’s team then encoded into a traditional control system and enacted on the tokamak.

“We were able to get a lot of the interpretability and reliability from these traditional control methods, while also taking advantage of the AI to use the exploration pipeline to get us there,” Tracey said.

Newer AI tools are expanding what the exploration pipeline can do. AlphaEvolve, a DeepMind system that searches in the space of computer programs rather than just numerical parameters, opens up fresh possibilities for designing experiments and interpreting their results, potentially accelerating the iterative loop between simulation, real-world experiment and updated prediction.

The most concrete test of these tools is now underway.

Last October, DeepMind announced a collaboration with Commonwealth Fusion Systems (CFS) to apply Torax and its related systems to SPARC, CFS’s planned tokamak.

“We are working with them now to predict SPARC before it comes online. We hope and plan to be there as SPARC comes online, helping them understand how to learn about the tokamak as quickly as possible,” Tracey said.

With SPARC’s construction advancing, the pressure to compress learning cycles has never been greater. Whether simulation can deliver on that promise fast and accurately enough is the question DeepMind and CFS are now racing to answer together.