Carrier: Europe’s AI Data-Center Expansion Accelerates as Global Buildout Widens

AI demand drives larger data-center projects across Europe and emerging regions as operators race to deploy high-density computing infrastructure

Europe’s artificial intelligence (AI) infrastructure is entering a rapid expansion phase as cloud providers and colocation companies begin building larger data centers to support high‑performance computing and generative AI workloads.

While the United States still leads in the scale of new AI facilities, Europe is beginning to follow with a wave of projects across the United Kingdom, Germany, France and several emerging markets where power availability and sustainability policies are shaping the next generation of digital infrastructure.

“In Europe, you’re building 20‑, 30‑, or 40‑megawatt data centers, while in the US you’re seeing 100‑megawatt buildings and even gigawatt campuses,” said Christian Senu, Vice President, Global Data Centers at Carrier. “The scale in the US is hard to compare apples to apples.”

“The speed of ramp‑up in Europe is coming a little bit after the ramp‑up in the US,” Senu said. “But Europe still has a lot of cloud computing and AI growth ahead.”

Europe’s expansion reflects the broader shift toward AI infrastructure worldwide as governments, cloud providers and enterprise operators race to deploy computing capacity capable of supporting increasingly complex machine‑learning models.

As operators build larger AI facilities, demand for advanced cooling technologies is rising rapidly.

Carrier, the US climate‑technology company founded in 1915 by Willis Carrier, the inventor of modern air conditioning, supplies cooling systems, building automation platforms, and digital management tools used in mission‑critical facilities, including hyperscale and colocation data centers. Reliable thermal control is essential for high‑density computing infrastructure.

Europe buildout

Senu spoke to TechJournal.uk in an interview during Data Centre World at Tech Show London on March 5, where industry leaders gathered to discuss the rapid expansion of AI infrastructure and the technologies required to operate next‑generation computing facilities.

He said the European market is evolving quickly as large colocation operators begin announcing projects measured in hundreds of megawatts of capacity.

“There have been a lot of announcements from large colocation providers saying they will build 100‑megawatt or multi‑100‑megawatt sites in Europe over the next few years,” Senu said. “Those projects are starting to ramp.”

Europe’s largest data‑center markets remain central to this expansion. However, operators are exploring additional locations as electricity supply, land availability, and sustainability regulations influence infrastructure planning.

Senu said new opportunities are emerging across southern and eastern Europe as developers look beyond established hubs to support long‑term growth.

Countries such as Portugal and Poland are attracting new projects because they offer energy resources and physical space suitable for large-scale digital campuses.

Nordic countries are also drawing significant attention due to their strong renewable‑energy supply and policies encouraging the reuse of waste heat from data‑center operations.

He said the Nordic region has been particularly influential in promoting sustainability standards requiring operators to recycle heat generated by servers and other computing equipment.

Those requirements are increasingly shaping how companies design new AI facilities across the continent.

Beyond Europe, AI infrastructure growth is spreading across Asia and the Middle East as governments and technology companies invest heavily in digital capacity.

“Asia is very strong,” Senu said. “India is a great emerging market, and we already have opportunities and projects developing there.”

The rapid adoption of artificial intelligence across financial services, telecommunications and cloud computing is pushing demand for high‑performance computing capacity in several Asian economies.

Australia and Japan are also emerging as important markets as regional companies seek to host advanced AI workloads closer to users and enterprise customers.

“You can look at Australia, parts of Asia such as Japan, and the Middle East,” Senu said. “In Saudi Arabia and some of those countries, there are very large projects underway.”

Carrier operates manufacturing facilities, engineering laboratories, and service teams across multiple regions, allowing the company to support customers building data centers in different markets.

“We have factories and engineering labs in each region, and we can support each region from any region,” Senu said. “That global footprint allows us to serve customers wherever the data‑center demand emerges.”

He said China also remains an important market for digital infrastructure, although cooling systems and facility designs there can differ from those used in Europe or North America.

Local conditions and regulatory requirements often shape how equipment is deployed and integrated within data‑center environments.

AI cooling shift

The rapid rise of AI workloads is forcing a technological shift in how data centers manage heat generated by powerful processors and graphics chips.

“For AI systems, you need more cooling because the chips run hotter,” Senu said. “That requires a more effective heat‑transfer method, which is why liquid cooling becomes important.”

Traditional cloud data centers relied largely on air cooling, circulating large volumes of chilled air through server halls to remove heat produced by computing equipment. However, the density of AI processors used to train and run advanced models has increased dramatically in recent years.

“A traditional cloud data center can often be cooled with air,” Senu said. “But as chip density rises, liquid cooling becomes necessary.”

Liquid cooling systems circulate water or specialized coolant directly through equipment or heat exchangers, allowing facilities to remove significantly more heat than air‑based systems.

“The heat transfer capability of water versus air is very significant,” he said. “Water can remove much more energy from those chips.”
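The gap Senu describes can be illustrated with a simple sensible-heat calculation, Q = ρ · V̇ · c_p · ΔT. The sketch below is a simplified illustration, not Carrier's sizing method: the fluid properties are approximate room-temperature textbook values, and the 100 kW rack load and 10 K coolant temperature rise are hypothetical inputs chosen only for the example.

```python
# Rough comparison of the coolant flow needed to carry away the same heat load
# with water versus air, using Q = rho * V_dot * c_p * delta_T.
# Property values are approximate figures for room-temperature fluids.

WATER = {"density_kg_m3": 997.0, "specific_heat_j_kg_k": 4180.0}
AIR = {"density_kg_m3": 1.2, "specific_heat_j_kg_k": 1005.0}


def volumetric_flow_m3_s(heat_load_w: float, delta_t_k: float, fluid: dict) -> float:
    """Return the volumetric flow (m^3/s) needed to absorb heat_load_w
    when the fluid warms by delta_t_k as it passes the heat source."""
    if heat_load_w < 0 or delta_t_k <= 0:
        raise ValueError("heat load must be >= 0 and temperature rise must be > 0")
    heat_per_m3 = fluid["density_kg_m3"] * fluid["specific_heat_j_kg_k"] * delta_t_k
    return heat_load_w / heat_per_m3


# Hypothetical inputs for illustration only (not figures from the interview).
RACK_HEAT_W = 100_000.0  # assumed 100 kW high-density rack
DELTA_T_K = 10.0         # assumed 10 K coolant temperature rise

water_flow = volumetric_flow_m3_s(RACK_HEAT_W, DELTA_T_K, WATER)
air_flow = volumetric_flow_m3_s(RACK_HEAT_W, DELTA_T_K, AIR)
ratio = air_flow / water_flow

print(f"Water: {water_flow * 1000:.1f} L/s")
print(f"Air:   {air_flow:.1f} m^3/s")
print(f"Air needs about {ratio:,.0f} times the volume flow of water")
```

Under these assumptions, water needs a few litres per second where air needs several cubic metres per second, a difference of more than three orders of magnitude in volume flow. Real systems also depend on heat-exchanger design, pumping and fan power, and allowable temperatures, which this sketch ignores.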

This transition is creating new opportunities for companies developing advanced cooling equipment and thermal‑management systems for hyperscale computing environments.

Efficiency economics

As operators deploy increasingly large AI clusters, energy efficiency has become one of the most important economic factors shaping data‑center design.

“When people talk about data centers, they focus on the billions of dollars committed to construction,” Senu said. “But operators will run those facilities for far longer than it takes to build them.”

The long operational life of data centers means cumulative electricity costs often exceed initial construction spending over a facility's lifetime.

“The real question is the operating cost of the data center,” he said. “That comes down to power usage effectiveness and how much energy is needed to cool the chips.”

Power Usage Effectiveness (PUE) measures how efficiently a data center uses electricity: it is the ratio of the total energy consumed by the facility to the energy delivered directly to computing equipment, so a value closer to 1.0 means less overhead.
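Written as a formula, the metric is a simple ratio. The worked figures that follow are hypothetical and serve only to show the arithmetic:

$$
\mathrm{PUE} = \frac{E_{\text{total facility}}}{E_{\text{IT equipment}}}
$$

For example, a facility that consumes 13 MWh in total while delivering 10 MWh to computing equipment has a PUE of 13 / 10 = 1.3. In that case, every unit of energy used for computing carries 0.3 units of overhead for cooling, power distribution and other support systems.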

“Being as close as possible to a PUE of 1.1 is considered best in class,” Senu said. “That means more of the power goes to computing rather than cooling.”

Reducing cooling energy allows operators to allocate more electricity to servers performing AI calculations.

“If you reduce the energy required for cooling, you allow more of that power to go to the computer itself,” he said.
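The trade-off Senu describes can be sketched numerically. The example below assumes a site with a fixed power budget and shows how much of it reaches computing equipment at different PUE values. The function names, the 40 MW site size (borrowed from the European scale mentioned earlier), the 30 MW IT load and the PUE 1.5 baseline are illustrative assumptions, not figures from Carrier.

```python
# Minimal sketch: how PUE determines the share of a fixed site power budget
# that reaches computing equipment. All inputs are hypothetical.

HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def it_power_available_mw(site_power_mw: float, pue: float) -> float:
    """IT power supportable within a fixed site budget: site power / PUE."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return site_power_mw / pue


def overhead_energy_mwh_per_year(it_load_mw: float, pue: float) -> float:
    """Annual non-IT energy (cooling, power distribution, etc.) for a
    constant IT load: IT load * (PUE - 1) * hours per year."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * (pue - 1.0) * HOURS_PER_YEAR


SITE_POWER_MW = 40.0  # assumed site budget
for pue in (1.5, 1.1):  # 1.5 is an assumed baseline; 1.1 is the figure Senu cites
    it_mw = it_power_available_mw(SITE_POWER_MW, pue)
    print(f"PUE {pue}: {it_mw:.1f} MW of {SITE_POWER_MW:.0f} MW reaches IT equipment")

# Overhead energy for an assumed constant 30 MW IT load at PUE 1.1.
print(f"{overhead_energy_mwh_per_year(30.0, 1.1):,.0f} MWh/year of overhead")
```

Under these assumptions, moving from a PUE of 1.5 to 1.1 frees nearly 10 MW of the same 40 MW budget for computing, which is the effect the quote describes.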

Carrier is positioning its technology around integrated thermal‑management systems designed to optimize efficiency across the entire data‑center environment.

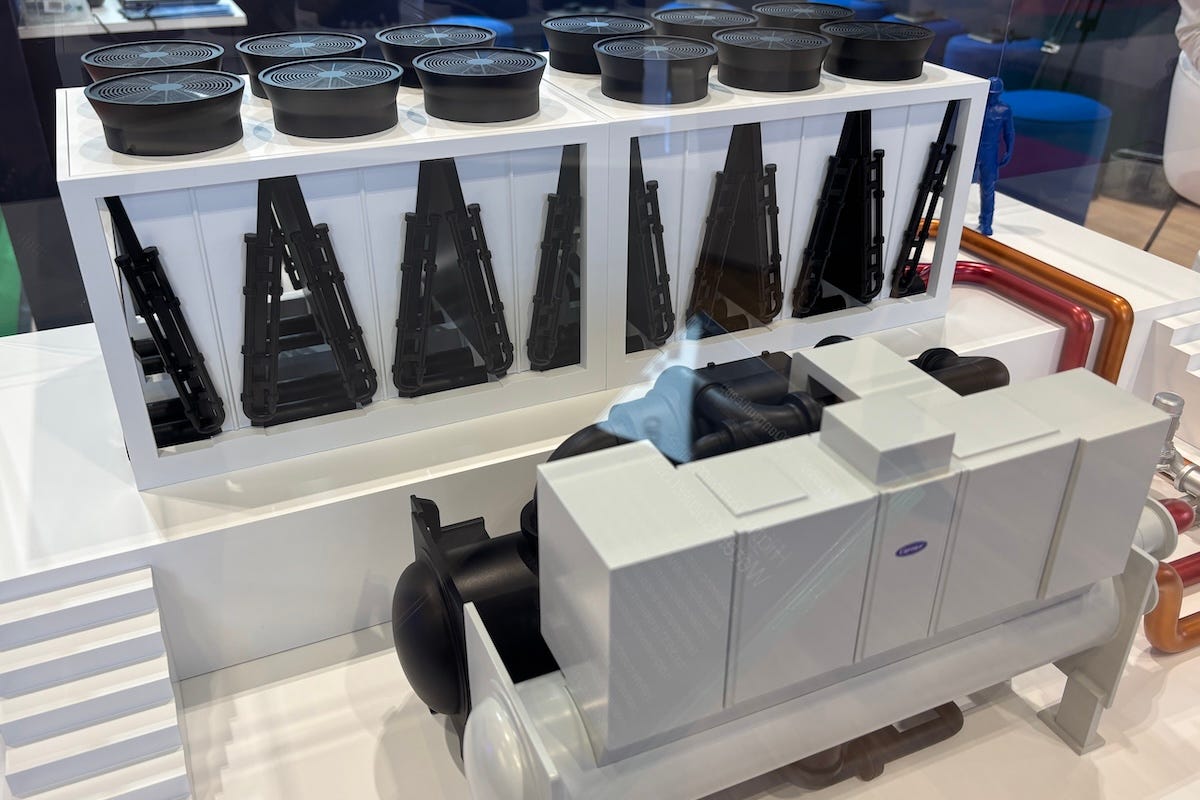

At the center of this approach is Carrier’s QuantumLeap platform, which integrates cooling hardware, controls and digital management tools for modern data centers. The system links chillers and liquid‑cooling equipment to building-automation and data-center-infrastructure management software, enabling operators to monitor and optimize thermal performance across a facility.

“QuantumLeap is our end‑to‑end thermal management solution,” Senu said. “It integrates technologies that used to exist as separate businesses into one system.”

The platform combines cooling hardware with digital management tools to control temperatures and monitor operations across data‑center infrastructure.

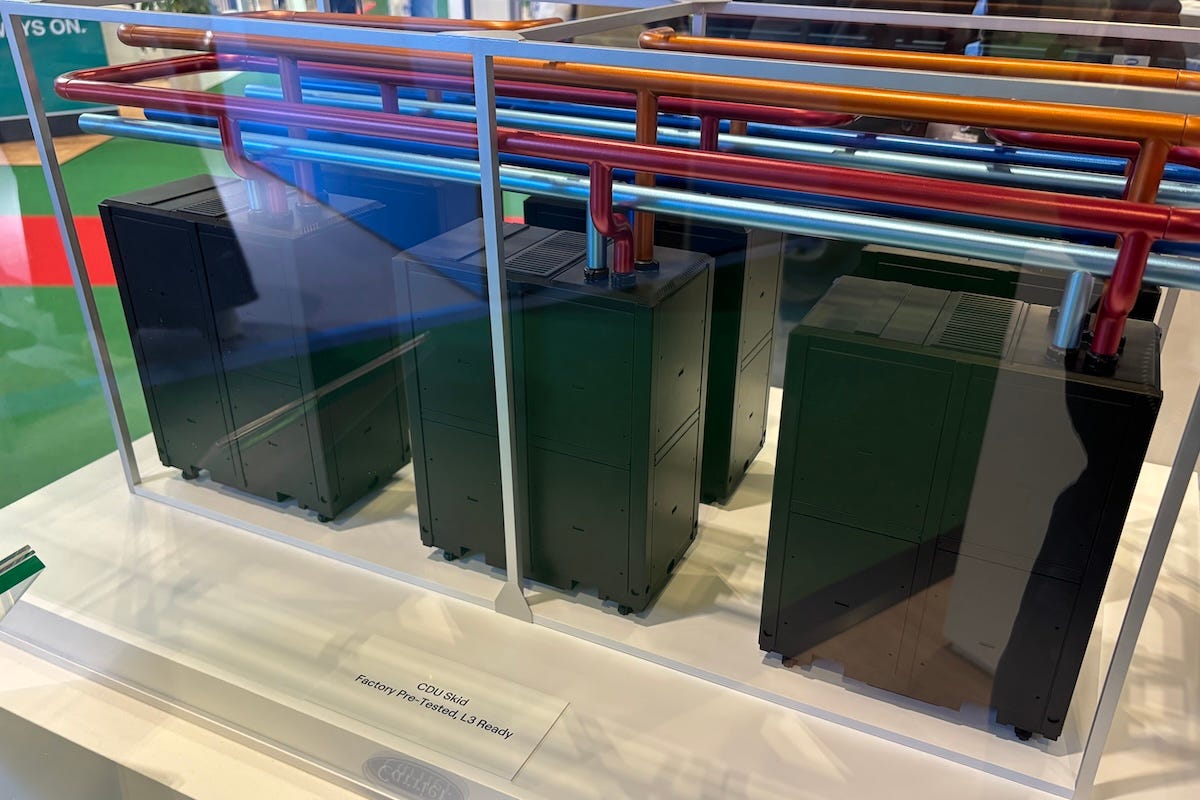

“We combine chillers, coolant distribution units (CDUs), building automation systems and data‑center infrastructure management software,” he said. “That allows us to manage thermal energy across the entire data center.”

Senu said CDUs regulate the flow of liquid coolant used to remove heat from high‑density server racks and connect the server equipment with the broader cooling system.

“Our value is not just in one product,” he said. “It’s how we integrate those technologies into a complete system solution.”

He said linking cooling equipment to monitoring software and building‑management platforms allows operators to track energy use across a facility and identify opportunities to improve efficiency. The approach is designed to support thermal management from individual chips to the facility’s central cooling systems.

Digital optimization

Software and AI are also beginning to influence how data centers are designed and operated.

“We are implementing digital twins to model the data‑center system,” Senu said. “That helps illustrate to customers what the efficiency and PUE will look like before the facility is even built.”

Digital‑twin models allow engineers to simulate how cooling systems behave under different workloads and environmental conditions, so operators can evaluate infrastructure choices before deployment.

“These tools help us recommend the best solution based on location, IT load and other parameters,” he said. “They also help identify opportunities to innovate in the products themselves.”

Advanced analytics and real‑time monitoring are expected to play a growing role in improving the energy performance of AI data centers. Over time, software systems will increasingly analyze operational data and adjust cooling strategies automatically as workloads change.